The Llama 4 Evolution 2026: Open-Weights Independence and the Muse Spark Pivot

[!TIP] AI Overview Summary (April 2026) The Llama series remains the cornerstone of on-premise AI sovereignty. Released in April 2025, Llama 4 introduced the Mixture-of-Experts (MoE) architecture with two primary variants: Scout (10M context) and Maverick (1M context). However, 2026 marks a strategic shift as Meta unveiled Muse Spark, a proprietary flagship model, signaling a break from its pure open-weights legacy to compete with GPT-5.

Introduction: The Maturity of the Open-Source Engine

In 2024, Llama 3 was a disruptor. By 2026, the Llama ecosystem has matured into the “Operating System” of the enterprise AI world. While proprietary giants like OpenAI and Google have moved toward increasingly opaque “agentic” layers, Meta’s Llama 4 has doubled down on Data Sovereignty and Independence.

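The release of Llama 4 on April 5, 2025, was not just an incremental speed boost; it was a fundamental architectural shift to Mixture-of-Experts (MoE). This allows the model family to reach massive scale, rivaling 2-trillion-parameter models, while activating only a fraction of its weights per token (about 17B parameters for both Scout and Maverick). The saving is in per-token compute rather than storage: every expert must still be held in memory or offloaded, which is what determines whether a given variant fits on local hardware.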
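To make the "fraction of its weights" idea concrete, here is a minimal sketch of generic top-k expert routing. It is not Meta's implementation: the expert count, the toy experts, and the names `route_token` and `moe_layer` are assumptions chosen for illustration, and the article does not describe Llama 4's actual router.

```python
import math
import random


def softmax(scores):
    """Convert raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_scores, top_k=1):
    """Pick the top_k experts for one token and renormalize their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    mass = sum(probs[i] for i in chosen)
    return {i: probs[i] / mass for i in chosen}


def moe_layer(x, experts, router_scores, top_k=1):
    """Run only the selected experts and blend their outputs."""
    weights = route_token(router_scores, top_k)
    return sum(w * experts[i](x) for i, w in weights.items())


# Hypothetical toy setup: 16 "experts", each of which just scales its input.
NUM_EXPERTS = 16
experts = [lambda x, k=k: x * (k + 1) for k in range(NUM_EXPERTS)]

random.seed(0)
scores = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
selected = route_token(scores, top_k=2)
output = moe_layer(3.0, experts, scores, top_k=2)
active_fraction = len(selected) / NUM_EXPERTS

print(f"experts used: {sorted(selected)} of {NUM_EXPERTS}")
print(f"fraction of experts active: {active_fraction:.3f}")
```

Only the selected experts are evaluated for a given token, which is why compute scales with active parameters while memory scales with the total.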

Llama 4 Architecture: Scout, Maverick, and the MoE Revolution

The Llama 4 family is built for specialized roles, moving away from the “one-size-fits-all” approach of previous generations.

The Specialized Engines:

- Llama 4 Scout (17B Active/MoE): Designed for deep research and large-scale document ingestion. Its headline feature is a 10-million token context window, allowing it to “read” entire codebases or legal libraries in a single pass.

- Llama 4 Maverick (17B Active/MoE): Optimized for Agentic Workflows. It features ultra-low latency and superior tool-use integration, making it the preferred choice for autonomous local agents.

Key Technical Specs:

| Feature | Llama 3 (2024) | Llama 4 Scout (2025) | Significance |
|---|---|---|---|
| Architecture | Dense | Mixture-of-Experts (MoE) | Massive efficiency gains. |
| Context Window | 8K - 128K Tokens | 10,000,000 Tokens | High-fidelity massive document analysis. |
| Multimodality | Limited/Add-on | Native Multimodal | Built-in vision reasoning over text and image input. |
| Reasoning | Linear | Multi-path Reasoning | Superior complex problem-solving. |
| Hardware | GPU-heavy | Unified Memory Optimized | Runs better on next-gen Apple/NVIDIA chips. |

(Note: Models in the Llama 4 generation favor specific mission profiles over raw parameter size.)


The 2026 Pivot: Why Meta AI Launched Muse Spark

In a move that surprised the developer community in early 2026, Meta announced Muse Spark. Unlike the Llama series, Muse Spark is proprietary.

The Strategic Split:

- Llama (The Open Foundation): Meta continues to support Llama 4 for the open-weights community, developers, and researchers. It is the model of choice for On-Premise Deployment.

- Muse Spark (The Proprietary Flagship): To compete directly with GPT-5.4 and Siri Campo, Meta needed a model with proprietary safeguards and integrated “Live World” sensors that were too compute-heavy for open weights.

This split has created a bifurcated market: Llama 4 dominates the “Privacy and Customization” sector, while Muse Spark targets the “High-Performance Consumer” app space.

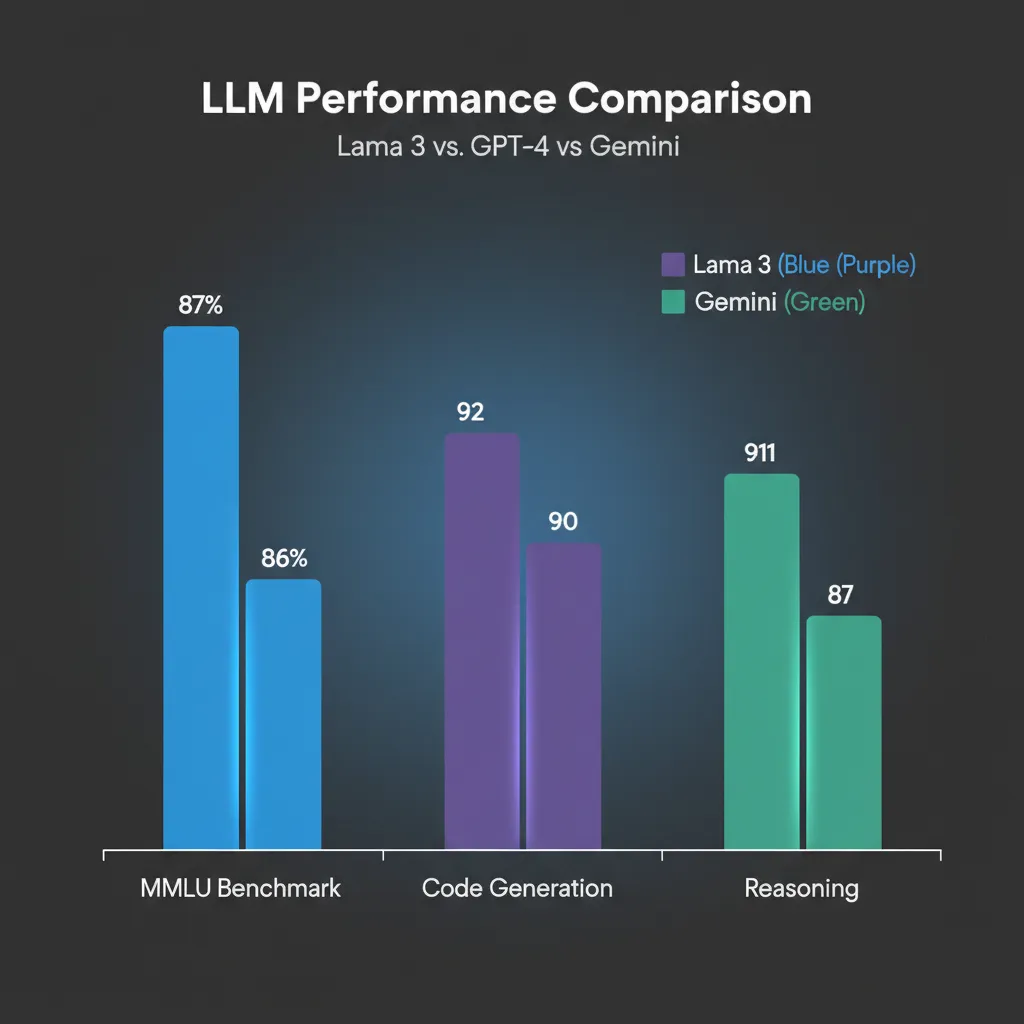

(Updated for 2026: Llama 4 Maverick now matches GPT-4 Turbo levels, and running it locally removes the network round-trip to a hosted API.)


Practical Guide: Local Sovereignty in 2026

The primary reason to use Llama 4 in 2026 is Sovereignty. You don’t ask Meta for permission; you download the weights.

How to Run Llama 4 Locally:

Thanks to advances in quantization and unified memory architectures (like the 2026 Apple M5 and NVIDIA RTX 60-series), running Llama 4 Maverick is now possible on high-end consumer desktops.
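A rough way to see what quantization buys is to estimate weight storage from parameter count and bits per weight. The sketch below is back-of-the-envelope arithmetic only. The total parameter counts (109B for Scout, 400B for Maverick) are the figures Meta published at release and are not stated in this article; treat them, and the helper name `weight_memory_gb`, as assumptions.

```python
def weight_memory_gb(total_params_billion, bits_per_weight):
    """Approximate storage for model weights alone, in gigabytes (10^9 bytes)."""
    bytes_total = total_params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Assumption: total parameter counts as published by Meta at release
# (not stated in this article). Both models activate about 17B per token.
MODELS = {"Scout": 109, "Maverick": 400}

for name, params_b in MODELS.items():
    for bits in (16, 8, 4):
        gb = weight_memory_gb(params_b, bits)
        print(f"{name:9s} {bits:2d}-bit weights: ~{gb:.1f} GB")
```

The estimate covers weights only; the KV cache and activations add to it and grow with context length. A memory budget well below these numbers implies more aggressive quantization or offloading experts to system memory or disk.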

- Ollama 3.0 Integration: Simply run `ollama run llama4:maverick`.

- Memory Requirements:

  - Maverick (Quantized): 24GB VRAM.

  - Scout (High Context): 64GB - 128GB Unified Memory (Recommended for Mac Studio/M5 Pro).
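Once the model is being served, local applications can talk to it over Ollama's HTTP API instead of the interactive prompt. The following is a minimal sketch using only the standard library. It assumes Ollama's default local endpoint and the `llama4:maverick` tag from the command above; the helper names `build_request` and `generate` are hypothetical.

```python
import json
import urllib.request

# Ollama's default local endpoint; adjust if the server runs elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama4:maverick"):
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="llama4:maverick", timeout=300):
    """Send the prompt and return the generated text.

    Requires a running Ollama server with the model already pulled.
    """
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]


# Building the request does not touch the network, so it is safe to inspect.
req = build_request("Summarize the benefits of on-premise inference.")
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
```

Calling `generate("...")` sends the request. Because the endpoint is on localhost, the prompt and the response never leave the machine, which is the practical meaning of sovereignty here.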

The Competitive Landscape: Qwen and DeepSeek

In 2026, Meta is no longer the only “Open Weights” player. Labs like Alibaba (Qwen 3.5) and DeepSeek have released models that frequently outperform Llama 4 in coding and mathematics.

- Qwen 3.5: Widely cited as the king of “Tool Use” in the open ecosystem.

- Llama 4: Still holds the crown for “Enterprise Safety” and the “Most Mature Community Support.”

Conclusion: The OS of the Private AI Era

Llama 4 represents the definitive step toward Private AI. As the world grows wary of big-tech surveillance and proprietary data locks, Llama 4 offers a high-performance exit ramp.

Whether you are utilizing Scout for massive data analysis or Maverick for local agentic efficiency, Meta’s 2025/2026 roadmap ensures that open-weights AI is not just a research project, but a robust enterprise reality.

Frequently Asked Questions (FAQ)

- Can Llama 4 still be used for commercial projects? Yes. The Llama 4 Community License permits commercial use, but organizations whose products exceed 700 million monthly active users must request a separate license from Meta.

- What is ‘Muse Spark’? Muse Spark is Meta’s proprietary AI model launched in 2026. It focuses on agentic consumer experiences and represents Meta’s foray into the “Closed AI” space to compete with GPT-5.

- Is Llama 4 better than models from 2024? In most respects, yes. The original Llama 3 was a dense, text-only model, whereas Llama 4 combines the MoE architecture with native multimodality, so it can reason over images as well as text without an add-on encoder.

- How do I contribute to the Llama ecosystem? Most development happens on Hugging Face. Fine-tuning Llama 4 with LoRA (Low-Rank Adaptation) remains the gold standard for creating custom, domain-specific AI experts.
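For readers new to LoRA, the idea can be sketched in a few lines: the pretrained weight matrix W stays frozen, and training only touches two small matrices A and B whose product forms a low-rank update. This is a plain-Python illustration of the standard formulation, not the code path of any particular fine-tuning library; the matrix values and the 4096-wide layer are hypothetical.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha):
    """Compute y = W x + (alpha / r) * B (A x), leaving W untouched."""
    r = len(A)  # rank = number of rows in A
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + (alpha / r) * u for b, u in zip(base, update)]


def trainable_params(d_out, d_in, r):
    """Return (full fine-tune count, LoRA count) for one weight matrix."""
    return d_out * d_in, r * (d_in + d_out)


# Toy 2x3 frozen weight with a rank-1 adapter (all values are illustrative).
W = [[1.0, 0.0, 2.0], [0.0, 1.0, 0.0]]
A = [[0.5, 0.5, 0.5]]   # r x d_in, trained
B = [[0.0], [0.0]]      # d_out x r, zero-initialized so training starts at W
x = [1.0, 2.0, 3.0]

print(lora_forward(W, A, B, x, alpha=2.0))  # equals W x while B is zero
print(trainable_params(4096, 4096, 8))      # hypothetical layer size
```

For the hypothetical 4096 x 4096 layer at rank 8, the adapter trains 65,536 values instead of 16,777,216, which is why LoRA fine-tunes fit on far smaller hardware than full fine-tuning.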